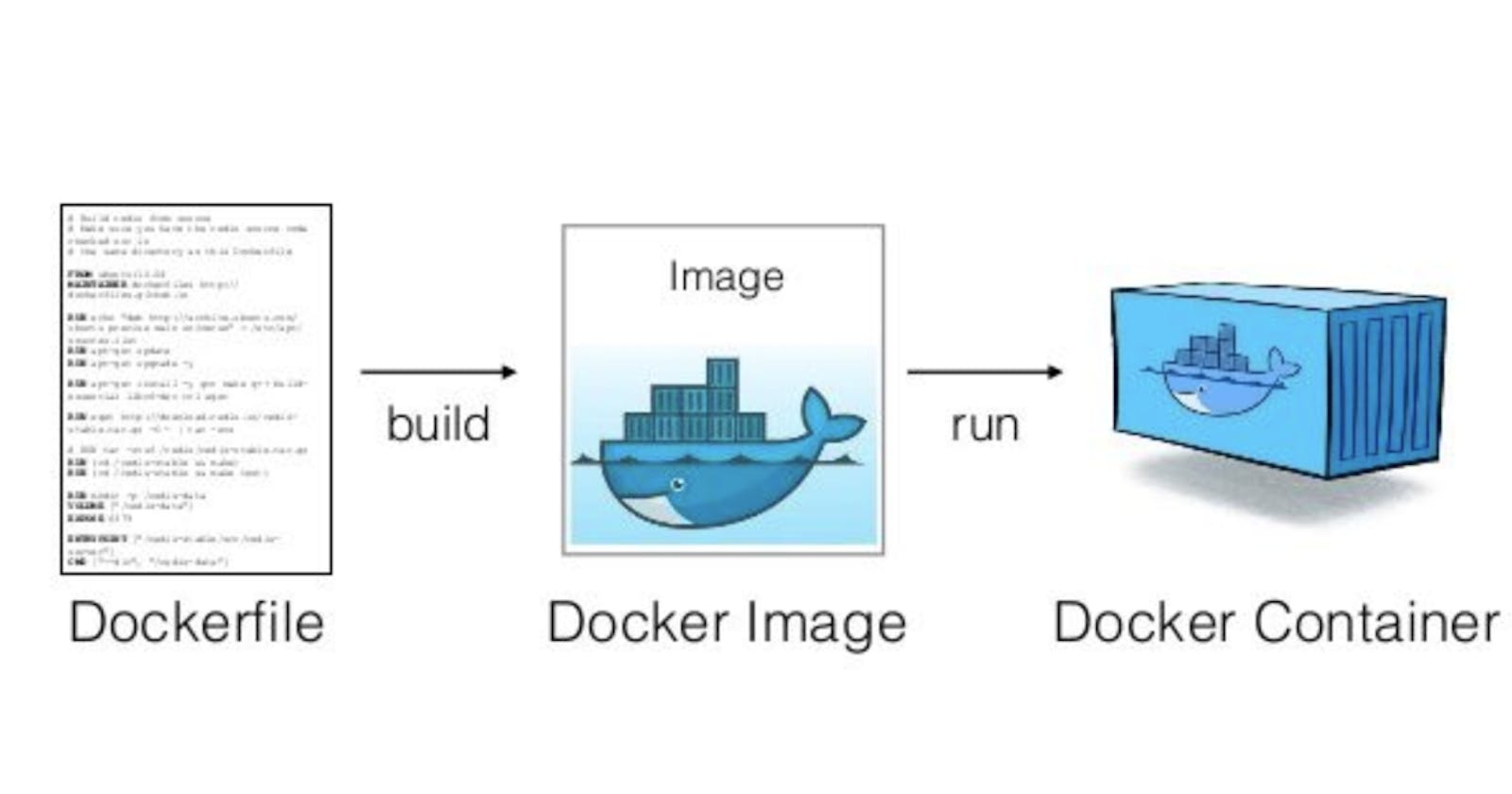

Containers have been the basis of code packaging in the software industry for a while, and most developers need some containerization skills in their daily work. A common problem comes up when you need to run some tasks during the startup of your Docker container. I will show you how I dealt with this problem and how the approach can streamline your workflow. Let's get started.

A Docker image contains everything containerized code needs to run, including the command that starts the process. The command that starts the main process in the container is set with the ENTRYPOINT or CMD instruction; the Dockerfile below uses CMD. On its own, this single command leaves no room for performing tasks before the main process starts. Such tasks range from running database migrations to setting up infrastructure the code requires. That sucks, but there's a solution: a shell script.

FROM node:latest

WORKDIR /usr/app

COPY package.json .

COPY package-lock.json .

RUN npm install

COPY src .

EXPOSE 8080

CMD ["node", "/dist/index.js"]

The entry point command can run a shell script that does everything we want. For my use case, I had only a few things to do: create a database if it does not exist, run any available migrations, and then start the Node.js server. I created a script called startup.sh containing the following:

#! /bin/bash

npx db-migrate db:create testdB

npx db-migrate up

node /dist/index.js

The first command creates the database using db-migrate.

The second command runs any available migrations.

The third command starts the server.

Here are several things to note.

1. The commands to be run can be anything at all. Any command that prepares the environment or performs some important task can go here.

2. I ran the commands using `npx`. Tread carefully with `npx`: it may need to download the package first, so it can fail if the container cannot reach the internet. Any command that depends on internet access falls in this category. This leads to the third point.

3. With this script as written, there is no guarantee that all the commands succeed, because the shell moves on to the next command even when one fails. We usually need all of them to succeed. Adding `set -e` to the beginning of the script is a great idea here, since it stops execution as soon as any command fails. Depending on what you want to run, this might be a good or bad idea.
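To make the effect of `set -e` concrete, here is a minimal sketch. It assumes stand-in commands (`echo` and `false`) in place of the real `npx db-migrate` and `node` calls, and the temporary directory and variable names are only for illustration.

```shell
#!/bin/bash
# Sketch: show how `set -e` stops a startup script at the first failure.
# The echo/false lines are stand-ins (assumptions), not the real commands.

demo_dir=$(mktemp -d)

cat > "$demo_dir/startup.sh" <<'EOF'
#!/bin/bash
set -e                    # exit as soon as any command fails
echo "create database"    # stand-in for: npx db-migrate db:create testdB
false                     # stand-in for a failing: npx db-migrate up
echo "start server"       # stand-in for: node /dist/index.js
EOF

# Run the demo script in its own bash process and capture the result.
output=$(bash "$demo_dir/startup.sh") && status=0 || status=$?

echo "output: $output"
echo "exit status: $status"

rm -rf "$demo_dir"
```

Only the first step prints, and the script exits with a non-zero status, so the server never starts on top of a failed migration. Removing the `set -e` line lets the last step run despite the failure.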

Our initial Dockerfile now becomes:

FROM node:latest

WORKDIR /usr/app

COPY package.json .

COPY package-lock.json .

COPY startup.sh .

RUN chmod +x startup.sh

RUN npm install

COPY src .

EXPOSE 8080

CMD ["./startup.sh"]
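The `chmod +x` step matters because the script is started directly as a program. The following sketch shows the difference outside Docker, assuming a throwaway `startup.sh` in a temporary directory (both are stand-ins for illustration).

```shell
#!/bin/bash
# Sketch: a script without the execute bit cannot be started directly.
# The temporary startup.sh here is a stand-in, not the real one.

work_dir=$(mktemp -d)
printf '#!/bin/bash\necho "started"\n' > "$work_dir/startup.sh"

# First attempt: no execute permission yet, so the shell refuses to run it.
"$work_dir/startup.sh" 2>/dev/null && before=0 || before=$?

# The same thing the Dockerfile's chmod step does.
chmod +x "$work_dir/startup.sh"
after=$("$work_dir/startup.sh")

echo "exit status before chmod: $before"
echo "output after chmod: $after"

rm -rf "$work_dir"
```

The first attempt fails with a non-zero exit status, and the second one runs the script as expected.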

With this, we can use one command to run many commands in our containers. This opens many doors, both to flexibility and to possible failure, so use it with care. I hope you have learned a thing or two.

Bonus

Containers are usually described as running only a single process. What if we run a command in the background using the & sign and then continue with our main task? Will it break the Docker universe? Try it out and let me know your findings.
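If you want a starting point for the experiment, here is a minimal sketch of the shell side only. The background and main tasks are hypothetical stand-ins, and the log file exists just to make the ordering visible. It does not say anything about how Docker itself treats the extra process.

```shell
#!/bin/bash
# Sketch: start a task in the background with &, then carry on with the main task.
# Both tasks are stand-ins (assumptions) that just write to a log file.

log=$(mktemp)

# Background task: runs in parallel and finishes a little later.
( sleep 1; echo "background done" >> "$log" ) &
bg_pid=$!

# Main task: continues immediately, without waiting for the background task.
echo "main done" >> "$log"

# Wait for the background task so its output is not lost.
wait "$bg_pid"

cat "$log"
```

The main task logs first because `&` returns control to the script right away. In a real startup script the main task would be the long-running server, so there would be no `wait` before it.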